Why AI Model Updates Break Production Workflows (And the Framework That Prevents It)

You don’t lose a week to one big failure.

You lose it in tiny drags you don’t see coming.

A newer language model appears, benchmarks spike, and everyone swaps it into production.

By Friday, you’re not building. You’re cleaning up after a system that shifted under your feet.

This pattern, where AI model updates break working workflows, affects everyone from solo builders to enterprise teams, but the solution isn’t complex.

This post explains why that happens, how it erodes prompt reliability and the workflows that depend on it, and the exact stabilization playbook I use to protect both solo builder workflows and enterprise rollouts.

Why New AI Models Break Production Workflows

Most people assume the latest model is a strict upgrade.

That assumption breaks real work.

Modern LLMs aren’t single, static models. They’re families of models coordinated by routing layers. Labels look stable. Behavior isn’t. A light tweak to routing, system messaging, or fine-tuning can change tone control, formatting fidelity, or reasoning depth without any visible “version change.”

What this means in practice:

- Yesterday’s concise, neutral answer becomes over-explained today.

- A JSON block acquires a one-line preface that silently breaks a downstream parser.

- Latency shifts enough to slow human review and handoffs.

Individually, these are minor nudges. Cumulatively, they are an implementation breakdown. You don’t notice a single crash. You notice a day gone to rework, a week gone to regression checks, and a month of momentum that never compounds.

If you want a plain-language primer on how these systems behave, skim this first so the rest lands faster and cleaner: AI in simple terms.

The Counter-Intuitive Truth: Strategic Delay Prevents Model Drift

There is a window after any “new model” release where behavior is in motion. Understanding how to manage LLM version changes during this window is crucial for production AI stability. Providers gather feedback, adjust routing, rebalance defaults, and patch edge cases. During that window, prompt reliability is inherently unstable.

The counter-intuitive move that saves time is to wait five to seven days before you stake critical workflows on a new model. Let it settle. Then promote.

This isn’t about being cautious. It’s about refusing to pay the rework tax.

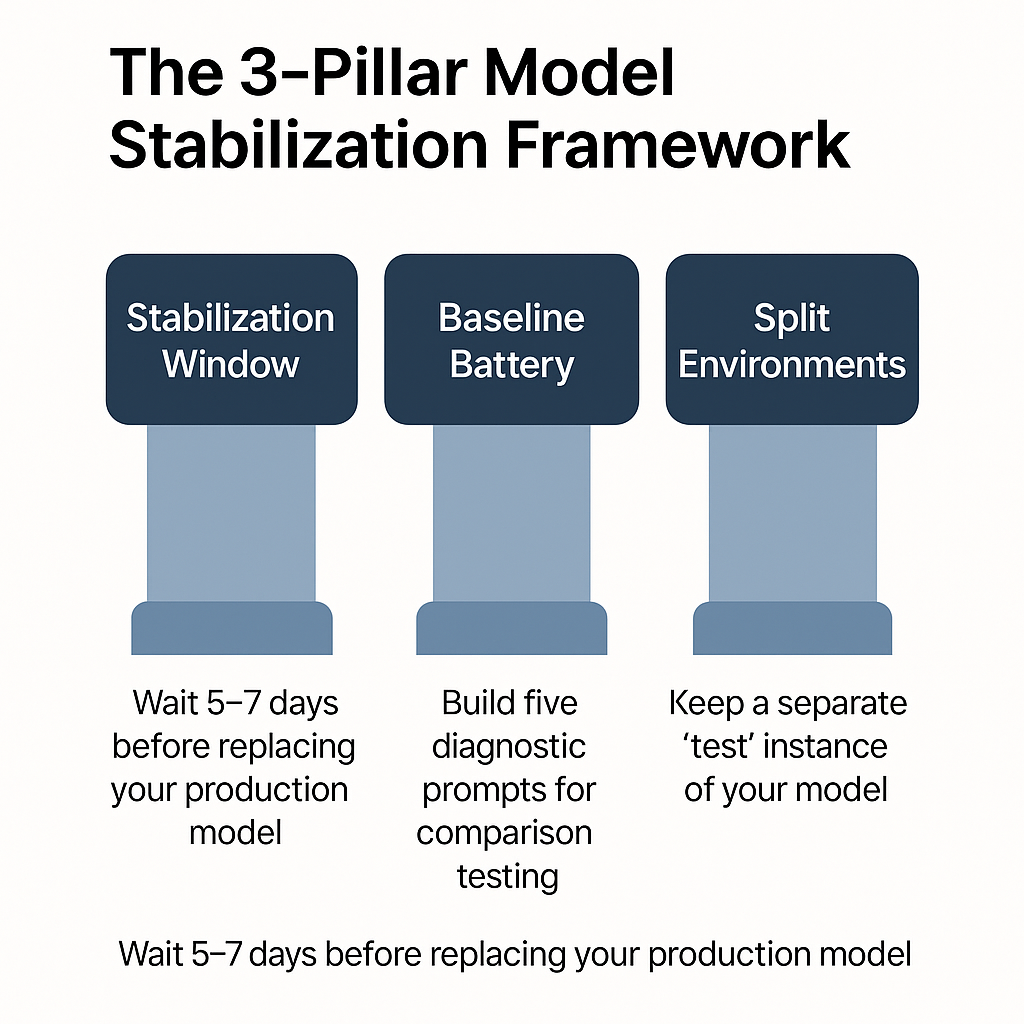

🎯 Key Takeaways: The 3-Pillar Stabilization Framework

- Stabilization Window: Wait 5-7 days after model release

- Baseline Battery: Test 5 core prompt types

- Split Environments: Keep stable and testing separate

The 3-Pillar Model Stabilization Framework

This LLM deployment strategy is simple to explain and strict to follow. It serves as both an AI model testing protocol and reliability insurance.

- Stabilization Window

- Baseline Battery

- Split Environments

Each removes a specific failure mode that wrecks solo builder workflows and enterprise deployments.

1) Stabilization Window: Let the Dust Settle

What to do

When a new AI model or language model variant appears, do not replace your production model for five to seven days. Use that period to observe behavior while your current workflow keeps shipping.

Why it works

Routing and system-message changes tend to cluster in the first week after release. You want your prompts and workflows to land on something stable, not on a moving target.

Signals to watch

- Tone control becomes consistent across a few days.

- Structured outputs stop drifting.

- Latency variance shrinks.
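“Latency variance shrinks” is easier to judge with a number than a feeling. Below is a minimal Python sketch. It assumes you record the latency of each battery run in milliseconds, one list per day. The coefficient of variation (standard deviation divided by mean) and the three-day lookback are my own illustrative choices, not a standard.

```python
from statistics import mean, stdev


def latency_cv(samples_ms: list[float]) -> float:
    """Coefficient of variation (stdev / mean) for one day's latency samples."""
    if len(samples_ms) < 2:
        raise ValueError("need at least two samples to measure variance")
    return stdev(samples_ms) / mean(samples_ms)


def variance_is_shrinking(daily_samples: list[list[float]], days: int = 3) -> bool:
    """True if the CV fell or held steady across each of the last `days` days."""
    if len(daily_samples) < days:
        return False  # not enough history yet; keep waiting
    cvs = [latency_cv(day) for day in daily_samples[-days:]]
    return all(later <= earlier for earlier, later in zip(cvs, cvs[1:]))
```

Using a ratio instead of the raw standard deviation means a model that is uniformly slower, but steady, still counts as settling.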

Solo builder angle

Speed feels like progress. But if your “upgrade” turns your build week into a debug week, you’ve burned the scarcest resource you have: momentum. If focus is already thin, protect it. A useful companion idea here is to choose fewer fights, on purpose. That’s the core of selective engagement.

2) Building Your 5-Prompt Baseline Battery

A good baseline isn’t a leaderboard score. It’s a small set of prompts that mirror your actual constraints.

LLM prompt reliability depends on systematic testing, not benchmark performance.

As Stanford’s AI training programs emphasize, effective prompt engineering relies on systematic testing and iterative refinement to ensure consistent, reliable outputs across different model configurations.

Build a five-probe battery:

- Tone control: one prompt that must return a concise, neutral answer within a word-count range.

- Structure fidelity: one prompt that must return JSON matching a strict schema, with zero prefacing or trailing text.

- Format consistency: one prompt that must maintain bullet formatting and section headers across runs.

- Reasoning tightness: one prompt that must show clear, stepwise reasoning without verbosity creep.

- Error handling: one prompt that must politely refuse or ask for clarification when inputs are ambiguous.
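Some of these probes can be checked automatically instead of by eye. The sketch below is a minimal Python version built on loose assumptions. The word-count ranges, the “line ending in a colon” header convention, and numbered steps as a proxy for stepwise reasoning are all placeholders you would tune to your own prompts. The probe names are hypothetical.

```python
import json
import re
from typing import Callable


def word_count(text: str) -> int:
    return len(text.split())


def check_tone(text: str, lo: int = 40, hi: int = 80) -> bool:
    # Concise, neutral answer: stays inside an assumed word-count range.
    return lo <= word_count(text) <= hi


def check_structure(text: str) -> bool:
    # Strict JSON: the whole output must parse, so any preface or trailing note fails.
    try:
        return isinstance(json.loads(text), dict)
    except json.JSONDecodeError:
        return False


def check_format(text: str) -> bool:
    # Assumed convention: at least one header line ending in ":" and one "- " bullet.
    lines = [ln.strip() for ln in text.splitlines() if ln.strip()]
    return any(ln.endswith(":") for ln in lines) and any(ln.startswith("- ") for ln in lines)


def check_reasoning(text: str, max_words: int = 150) -> bool:
    # Stepwise: two or more numbered steps ("1.", "2.") and no verbosity creep.
    steps = re.findall(r"^\s*\d+\.", text, flags=re.MULTILINE)
    return len(steps) >= 2 and word_count(text) <= max_words


def check_error_handling(text: str) -> bool:
    # Ambiguous input: expect a clarifying question rather than a confident answer.
    return "?" in text


PROBES: dict[str, Callable[[str], bool]] = {
    "tone_control": check_tone,
    "structure_fidelity": check_structure,
    "format_consistency": check_format,
    "reasoning_tightness": check_reasoning,
    "error_handling": check_error_handling,
}


def score_battery(outputs: dict[str, str]) -> dict[str, bool]:
    """Map each probe name to pass/fail for one run's saved outputs."""
    return {name: check(outputs.get(name, "")) for name, check in PROBES.items()}
```

A question mark is a crude signal for a clarifying question. Treat these checks as tripwires that tell you where to look, not as a grade.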

Run the battery daily during the stabilization window. Keep the inputs identical. Save outputs. Compare deltas.

What you’re measuring

Not just accuracy, but prompt reliability: does the language model maintain tone, structure, and length? Do small changes appear and then disappear? Are you seeing pattern stability, not one-off wins?

Enterprise vs solo

- Enterprise: record diffs, annotate breaks, and require sign-off before promotion.

- Solo: a one-page log is enough, but write it. If you don’t record drift, you’ll rationalize it.

3) Split Environment Setup: Known-Stable vs Testing

Why this matters

Most implementation failures are self-inflicted. You “just try the new thing” in your working file or live prompt chain. It behaves differently. Now you’re stuck deciding whether to roll back or fix everything.

How to split

- Known-Stable: your last reliable model and prompts. Ship from here.

- Testing: the new model, your Baseline Battery, and a copy of one or two production prompts.

Promotion requires two conditions:

- The Baseline Battery shows no meaningful drift for at least two consecutive days.

- Your copied production prompts match Known-Stable behavior within your tolerance.
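The two promotion conditions translate into a small gate. In this sketch, “no meaningful drift” is assumed to mean zero drifted Baseline Battery probes that day. “Within your tolerance” is assumed to mean a similarity score of at least 0.9 between the copied production prompts and Known-Stable output. `DayResult` is a hypothetical record you would fill from your own logs.

```python
from dataclasses import dataclass


@dataclass
class DayResult:
    drifted_probes: int   # Baseline Battery probes flagged as drifted that day
    prod_match: float     # similarity of copied production prompts to Known-Stable (0..1)


def ready_to_promote(history: list[DayResult], min_clean_days: int = 2,
                     tolerance: float = 0.9) -> bool:
    """Both conditions must hold on each of the most recent `min_clean_days` days."""
    if len(history) < min_clean_days:
        return False
    recent = history[-min_clean_days:]
    return all(day.drifted_probes == 0 and day.prod_match >= tolerance for day in recent)
```

Only the most recent days count, so a noisy first day of the window doesn’t block promotion once the model has settled.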

Rollback plan

Write it once, reuse it always. What do you revert, where do you log, and who needs to know? Even a solo builder benefits from a simple ritual. If a swap ever goes wrong, run a lightweight postmortem and turn the findings into one more pre-flight check. Here’s a concise way to do that: the RAFT postmortem framework.
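A rollback is easiest when production reads the active model from one place. The minimal Python sketch below assumes your code looks up a model identifier from a single routing object. `ModelRouting` and the model names are hypothetical. Notifying people is left out; the log lines are where you would hook it in.

```python
import logging
from dataclasses import dataclass, field

logging.basicConfig(level=logging.INFO)
log = logging.getLogger("model-routing")


@dataclass
class ModelRouting:
    known_stable: str            # hypothetical identifier of the model you ship from
    testing: str                 # hypothetical identifier of the candidate model
    active: str = ""             # what production calls right now
    history: list[str] = field(default_factory=list)

    def __post_init__(self) -> None:
        if not self.active:
            self.active = self.known_stable  # production starts on Known-Stable

    def promote(self) -> None:
        # Remember what we were on so rollback is a single call.
        self.history.append(self.active)
        self.active = self.testing
        log.info("promoted %s -> %s", self.history[-1], self.active)

    def rollback(self, reason: str) -> None:
        if not self.history:
            raise RuntimeError("nothing to roll back to")
        previous = self.active
        self.active = self.history.pop()
        log.warning("rolled back %s -> %s: %s", previous, self.active, reason)
```

For example, `routing.rollback("JSON preface broke the parser")` restores the previous model and leaves a log line that can feed the postmortem.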

Understanding AI Model Drift: What Actually Changes Between Versions

A label like “v2” or “Pro” suggests a fixed object. Behavior suggests otherwise. Worse, the label or version number often doesn’t change at all, even when the internals underneath have changed substantially.

Here are the small moves that cause big cleanup:

- Routing: the provider steers your request to a different sub-model for cost, latency, or task fit. Your tone and length controls wobble.

- System messaging: safety or helpfulness constraints nudge toward longer prefacing, disclaimers, or softened claims. Your output gets wordy.

- Fine-tuning layers: post-release adjustments shift how the model prioritizes brevity versus completeness. Your carefully tuned format starts to creep.

- Latency trade-offs: a shift to favor throughput changes human review rhythms. The work still “runs,” but teams slow down.

None of this is dramatic. All of it is costly. This is why the stabilization window exists, and why baseline testing matters more than leaderboard screenshots.

OpenAI’s official deployment guidelines emphasize similar principles, noting that responsible model deployment requires careful monitoring and systematic approaches to handle model behavior changes safely across enterprise environments.

For a refresher on how models learn and why these small shifts appear, this overview is useful context: neural networks, simplified.

Why Solo Builders Lose Weeks to AI Model Updates

The trap looks like this: “If I adopt early, I’ll be faster.”

In reality, you trade predictable progress for unpredictable cleanup.

Most solo builder AI workflows lack the testing infrastructure that enterprise AI deployment teams use.

Early adoption can be wise when the upside is truly outsized for your task. But in most cases, the return on waiting a few days is higher than the return on being first. Your solo builder workflow needs stability more than novelty. Stability compounds. Novelty interrupts.

If you need a practical lens for protecting momentum, use a next-action focus when the environment shifts. It keeps you shipping while the model settles: the Next Step framework.

Mini Enterprise Insight

Across multiple rollouts using prompt engineering best practices, the pattern is the same:

- First-week adopters burn days “fixing” prompts that weren’t broken.

- Mid-cycle adopters get stable performance without the churn.

- The only difference is that the second group treats models like infrastructure, not headlines.

When you apply the same discipline in a one-person startup, you get enterprise-grade outcomes with solo resources. Your prompts last longer. Your automation chains survive updates. Your decision-making looks boring from the outside and powerful from the inside.

Promoting A New Model: A Quick Checklist

Use this short gate before any swap. If you can’t check all three, don’t promote.

- Behavior stable for 2+ days on your Baseline Battery.

- Format fidelity confirmed on a cloned production prompt.

- Rollback written and easy to trigger.

If you ever do promote and then smell drift, don’t argue with it. Roll back, log what changed, and decide if the upside is worth a second attempt after another day of stabilization.

4 Technical Guardrails That Prevent AI Workflow Failures

Add these small defenses to reduce prompting failures and implementation breakdowns:

Schema Validators for JSON Output Reliability

Validate JSON before it reaches downstream steps. If your model adds a preface, the validator catches it.

Length Constraints to Force Model Concision

Post-process outputs to a target range. Force concision when the model tries to be helpful.

Refusal Logic: Teaching AI When to Say “I Don’t Know”

Teach your assistant to ask clarifying questions when uncertainty is above a threshold rather than hallucinating confidence.

Output Diffing

Automatically compare new outputs to a golden set and flag material changes in tone or structure.

These aren’t complex. They are boring on purpose. Boring is what makes prompt reliability compound.
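The first two guardrails, schema validation and length constraints, are mechanical enough to sketch in a few lines of standard-library Python. The schema in `REQUIRED_KEYS` is hypothetical. Trimming at the last complete sentence is one design choice; rejecting the output and re-prompting is a reasonable alternative.

```python
import json
import re

# Hypothetical schema: required key -> expected Python type after parsing.
REQUIRED_KEYS: dict[str, type] = {"title": str, "summary": str, "tags": list}


def validate_json(raw: str, schema: dict[str, type] = REQUIRED_KEYS) -> dict:
    """Parse model output strictly and check it against the schema.

    Raises ValueError (json.JSONDecodeError is a subclass) before anything
    downstream sees the output, so a chatty preface fails loudly.
    """
    data = json.loads(raw)  # "Here's the JSON:" before the object raises here
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object at the top level")
    for key, expected in schema.items():
        if key not in data:
            raise ValueError(f"missing key: {key}")
        if not isinstance(data[key], expected):
            raise ValueError(f"{key!r} should be {expected.__name__}")
    return data


def enforce_max_words(text: str, max_words: int) -> str:
    """Trim to at most `max_words` words, backing up to the last full sentence if one exists.

    Note: rejoining the kept words collapses newlines into single spaces.
    """
    words = text.split()
    if len(words) <= max_words:
        return text  # already within range; leave untouched
    clipped = " ".join(words[:max_words])
    sentence_ends = [m.end() for m in re.finditer(r"[.!?](?=\s|$)", clipped)]
    return clipped[: sentence_ends[-1]] if sentence_ends else clipped
```

Because both parse errors and schema errors surface as `ValueError`, a single `except ValueError` around the call is enough to stop a bad output at the boundary.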

Headline Advice vs. Implementation Reality

Leaderboards and demo threads are not your workflow.

Your work lives in the gap between “can” and “does,” between one-off success and repeatable reliability.

The stabilization playbook closes that gap:

- Wait a short window.

- Test what you actually depend on.

- Keep testing separate from shipping.

This is how you build an AI model adoption strategy that scales down to a one-person startup and up to a large team, without changing principles.

Enterprise vs Solo: Scaling the Stabilization Framework

Recap: The Contrarian Truth

The fastest way to move fast is to slow down at the right time.

Delay promotion. Measure behavior. Split environments.

You’ll ship more, fix less, and protect the one asset every builder needs most: momentum.

If you want a dead-simple checklist to run after any swap that still goes wrong, use this lightweight postmortem and turn it into a one-line safeguard for next time. It’s short, and it works: the RAFT framework.

And if you need a steady way to keep moving when everything changes around you, start here and make your next action obvious in five minutes: the Next Step framework.

P.S. I share what I’ve lived, built, or deeply studied, never summaries or theory. Join builders who refuse to waste time on AI hype and broken workflows at rezamotaghi.substack.com.

Frequently Asked Questions

What is a Model Stabilization Framework?

A Model Stabilization Framework is a set of practices used to systematically test and delay the adoption of new AI models, ensuring they are reliable and stable before being integrated into production workflows.

What is “model drift” and why does it matter?

Model drift refers to the small, unpredictable changes in an AI model’s behavior that occur over time or with a version update. It matters because it can silently break downstream systems, affect prompt reliability, and lead to costly rework.

How long should I wait before adopting a new AI model?

The recommended “Stabilization Window” is five to seven days. This period gives the provider time to make most of its early tweaks to routing or fine-tuning, so your prompts and workflows land on a more stable target.

How does this framework help with prompt reliability?

By using a “Baseline Battery” of fixed prompts, the framework allows you to measure and compare outputs from a new model against a known-stable version. This confirms that the model maintains consistent tone, structure, and reasoning, which is the core of prompt reliability.

Is this framework only for large enterprises?

No. The framework is designed to scale from a single developer (“solo builder”) to large teams. For a solo builder, it is even more critical to protect momentum and avoid the “false economy” of early adoption that leads to unpredictable cleanup.